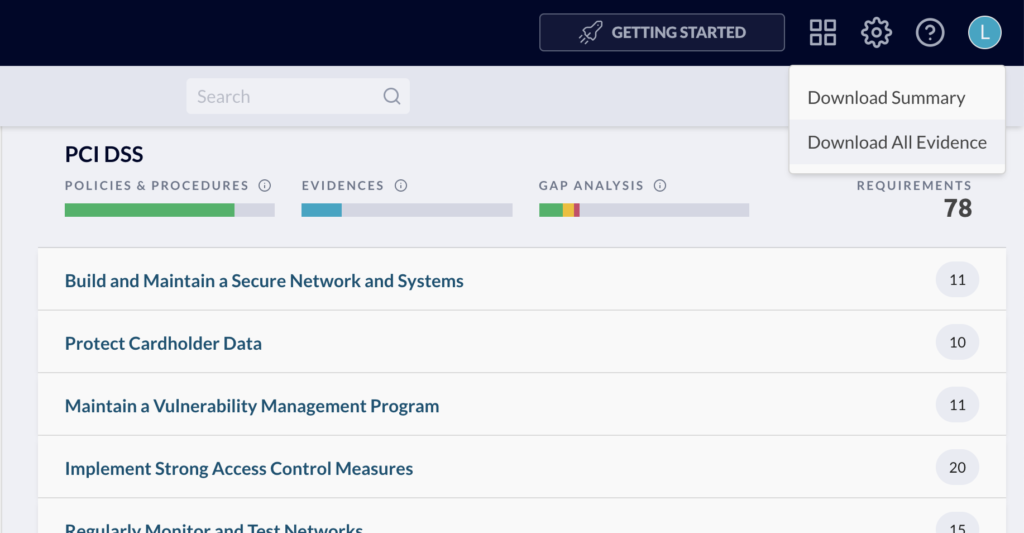

One of the newer features of JupiterOne is the ability to download all evidence for a compliance standard. This feature collects the compliance requirements, questions, query results, notes, and links into a single zip folder for presentation to an auditor.

Because we move quickly at JupiterOne, we engineered this feature to absorb new and changing requirements, which allows fast, stable turnarounds for our customers. After the initial release, several additions were required, including relevant policies and procedures, user-provided links, and a comprehensive summary file (CSV). Using a functional pipeline, we achieved both flexibility and speed from data retrieval to writing files.

This functional pipeline for building a zipped folder is broken into two smaller pipelines. The first retrieves the data from various sources: S3, DynamoDB tables, various GraphQL APIs, and the JupiterOne graph. The second evaluates, transforms, and writes results to the relevant files.

Data Pipeline

For the data pipeline, our goal was to create a chainable dependency graph of the data to be retrieved. Fortunately, JavaScript's Promise enables this monadic style of chaining while keeping concurrency high.

Multiple calls to Promise.all allow independent operations to run concurrently while dependent ones run serially. By crafting a consistent input structure that each function uses, we tie these functions only loosely to the pipeline and can still unit-test them individually.
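The staging described above can be sketched roughly as follows. This is a minimal illustration, not the production code: `loadLinks`, `loadNotes`, `loadQueryResults`, `loadEvidenceData`, and the `delay` helper are hypothetical names, the data is faked in memory, and only `loadRequirements` is a name taken from this article.

```javascript
// Hypothetical stand-in for a remote call (S3, DynamoDB, GraphQL, graph query).
const delay = (value, ms = 10) =>
  new Promise((resolve) => setTimeout(() => resolve(value), ms));

// Every loader accepts the same input object, so each can be unit-tested alone.
const loadRequirements = ({ standard }) =>
  delay([`${standard}-1.1`, `${standard}-1.2`]);
const loadLinks = ({ standard }) =>
  delay({ [`${standard}-1.1`]: ["https://example.com/policy"] });

// These loaders depend on the refs produced by the first stage.
const loadNotes = ({ refs }) =>
  delay(Object.fromEntries(refs.map((ref) => [ref, []])));
const loadQueryResults = ({ refs }) =>
  delay(Object.fromEntries(refs.map((ref) => [ref, { count: 0 }])));

async function loadEvidenceData(input) {
  // Stage 1: independent loads run concurrently.
  const [refs, links] = await Promise.all([
    loadRequirements(input),
    loadLinks(input),
  ]);

  // Stage 2: starts only after stage 1 resolves, but its members
  // still run concurrently with each other.
  const stageTwoInput = { ...input, refs };
  const [notes, queryResults] = await Promise.all([
    loadNotes(stageTwoInput),
    loadQueryResults(stageTwoInput),
  ]);

  return { refs, links, notes, queryResults };
}
```

Calling `loadEvidenceData({ standard: "SOC2" })` resolves to a single object holding every loaded piece, which is the shape a later stage would consume.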

Additionally, compliance evidence can be downloaded in two ways: for an entire compliance standard or for a single requirement. For an entire standard, the requirements and controls for every section are downloaded. Fundamentally, we want both entry points to always download the same types of data and never fall out of sync.

To achieve this, the top-level data pipeline remains constant, and the individual promises handle whether a single requirement or an entire standard has been requested. These functions return a uniform data structure in both cases, as loadRequirements illustrates.
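A rough sketch of what such a function might look like is shown here. The shape of the `standard` object, the `requirementRef` parameter, and the `{ refs }` return shape are assumptions made for illustration; the article only states that the function is called `loadRequirements` and that its output is uniform.

```javascript
// Hypothetical in-memory standard; the real data comes from remote sources.
const exampleStandard = {
  sections: [
    { requirements: [{ ref: "1.1" }, { ref: "1.2" }] },
    { requirements: [{ ref: "2.1" }] },
  ],
};

// Returns the same shape ({ refs: [...] }) whether one requirement or the
// whole standard was requested, so downstream code never branches on it.
async function loadRequirements({ standard, requirementRef }) {
  const all = standard.sections.flatMap((section) => section.requirements);
  const refs =
    requirementRef === undefined
      ? all
      : all.filter((requirement) => requirement.ref === requirementRef);
  return { refs };
}
```

With this sketch, `loadRequirements({ standard: exampleStandard })` yields three refs and `loadRequirements({ standard: exampleStandard, requirementRef: "2.1" })` yields one; both results are arrays wrapped in the same object.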

This uniform output structure allows the data pipeline to plug easily into the second pipeline, which evaluates, transforms, and writes the files.

Each data structure has a proper "empty" state that does not require explicit conditionals. For security items, 100 loaded values are processed exactly the same as an empty array. For notes, the dictionary contains an array of loaded notes scoped to the requirement/control reference. If there are none, the write operation for notes works with an empty array and applies the same transforms regardless. Only the file writer needs a conditional check, so that only non-empty files are written.
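The idea of a built-in empty state can be illustrated with a small sketch. The helper names (`notesFor`, `formatNotes`, `buildNotesFile`), the `text` field on a note, and the file path are all hypothetical; the point is only that the transforms never branch on emptiness and the single conditional sits at the file boundary.

```javascript
// Missing refs fall back to an empty array, the "empty" state for notes.
const notesFor = (notes, ref) => notes[ref] ?? [];

// The transform is identical for zero notes or a hundred.
const formatNotes = (noteList) =>
  noteList.map((note, index) => `${index + 1}. ${note.text}`);

// The only conditional: skip the file when there is nothing to write.
function buildNotesFile(notes, ref) {
  const lines = formatNotes(notesFor(notes, ref));
  return lines.length > 0
    ? { path: `${ref}/notes.txt`, body: lines.join("\n") }
    : null;
}
```

Here `buildNotesFile({}, "1.1")` returns `null`, while a dictionary containing notes for `"1.1"` produces a file description with one numbered line per note.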

Consider just a few of the advantages of this data pipeline:

- Easily debuggable through unit-testing any section of the pipeline

- Optional continuation if certain pieces of data cannot be retrieved

- High concurrency even with chained dependencies

- Consistency between download types (entire standard and individual requirement)

- Completely flexible mix-and-match for writing files that may depend on multiple data sources

As with any approach, however, there are trade-offs:

- Not the most efficient approach for any one output format (i.e., more data may be held in memory than each file needs)

- Slightly harder to read for engineers unfamiliar with functional programming, due to its conciseness

Write Pipeline

For the write step, the primary array is the list of requirements/controls (refs) seen in the output of loadInitialEvidenceData. Once the requested refs are retrieved, the second pipeline's logic remains the same whether a single requirement or an entire standard is being downloaded. The pipeline needs only the reference items and the relevant data, and it uses the same Promise structure as the data pipeline.
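One way to picture this step is the sketch below. It is an assumption-laden illustration: the writer names (`writeNotes`, `writeSummary`), the `writeEvidence` entry point, the file paths, and the use of an in-memory `Map` in place of a real zip archive are all hypothetical.

```javascript
// Each writer receives the same { refs, data } input and returns a list of
// [path, content] entries. Writers are independent of one another.
const writeNotes = async ({ refs, data }) =>
  refs.map((ref) => [`${ref}/notes.txt`, (data.notes[ref] ?? []).join("\n")]);

const writeSummary = async ({ refs, data }) => {
  const rows = refs.map((ref) => `${ref},${(data.notes[ref] ?? []).length}`);
  return [["summary.csv", ["ref,noteCount", ...rows].join("\n")]];
};

// The pipeline itself does not care whether refs holds one item or many.
async function writeEvidence(refs, data) {
  const writers = [writeNotes, writeSummary];
  const results = await Promise.all(
    writers.map((write) => write({ refs, data }))
  );
  // Flatten one level: a list of entry lists becomes a single list of entries.
  return new Map(results.flat());
}
```

Adding a new output file in this sketch means appending one more writer to the `writers` array; the existing writers and the pipeline stay untouched.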

Because the data is passed to each function, the logic for each one is typically quite simple. The security items implementation, for example, writes policies and procedures to a CSV file.
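A simplified version of such a writer might look like the following. The column names (`ref`, `name`, `type`) and the function names are assumptions for illustration, not the actual JupiterOne schema; the quoting follows the usual CSV convention of doubling embedded quotes.

```javascript
// Quote a field only when it contains a comma, quote, or line break.
const escapeCsv = (value) => {
  const text = String(value ?? "");
  return /[",\r\n]/.test(text) ? `"${text.replace(/"/g, '""')}"` : text;
};

// Hypothetical columns for a security item (policy or procedure).
const COLUMNS = ["ref", "name", "type"];

// Pure function: security items in, CSV text out. An empty array
// yields just the header row, with no special-casing.
function securityItemsToCsv(securityItems) {
  const rows = securityItems.map((item) =>
    COLUMNS.map((column) => escapeCsv(item[column])).join(",")
  );
  return [COLUMNS.join(","), ...rows].join("\n");
}
```

Because the function takes its data as an argument and touches no file system, it can be tested with a plain array and a string comparison.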

Not only has each segment of the pipeline become manageable, but each is also easy to test in isolation, so bugs can be resolved quickly.

Conclusion

Building software in a sustainable manner is not only for long-established products. In this feature, it has given us more predictable results and let us integrate new features quickly as we iterate on our product to provide the best experience for our customers. While not every new feature or bug fix can have this level of sophistication in a startup, knowing the trajectory of a feature can help engineers determine which abstractions are necessary and how many new or changing requirements may be imposed in the future.

For engineers, choosing and implementing abstractions must take business requirements into account. On the business side, communicating the broader scope of a feature is not about boring your engineers but about giving them context on what may come later and the scalability expected of the implementation. At JupiterOne, we intend to build a product that is reliable and valuable to our customers. Communication between engineers and business personnel is a crucial component of that goal.